Facebook Account Hacked

Today my Facebook account was hacked. Messages were sent to 42 of my friends, with a random subject and contents of the form:

hi! <recipient's first name>! <link>

All of the messages were shown as sent via Facebook Mobile, which, to my knowledge, I have never used.

I did several things:

- I posted on my Facebook wall advising people not to open these messages.

- I reported the intrusion to Facebook.

- I changed my Facebook password.

- I replied manually to every message sent, warning people not to click on the links.

Below is the reply from Facebook. I've not replied yet, but it's frustrating that Facebook have not listened to a word I've said.

Subject: Re: Messages or Posts Were Sent From My Account, and I Didn't Send Them

Hi,

We have detected suspicious activity on your Facebook account and have reset your password as a security precaution.

Er... I told you about it. You're replying to an e-mail which I sent you about it. Detected my arse.

It is possible that malicious software was downloaded to your computer or that your password was stolen by a phishing website designed to look like Facebook. Please carefully follow the steps provided:

1. Run Anti-Virus Software: If your computer has been infected with a virus or with malware, you will need to run anti-virus software to remove these harmful programs and keep your information secure.

For Microsoft
http://www.microsoft.com/protect/viruses/xp/av.mspx
http://www.microsoft.com/protect/computer/viruses/default.mspx

For Apple
http://support.apple.com/kb/HT1222

As I told you in my e-mail, I run Linux and it is up-to-date.

2. Reset Password: From the Account Settings page, you will need to create a new password. Be sure that you use a complex string of numbers, letters, and punctuation marks that is at least six characters in length. It should also be different from other passwords you use elsewhere on the internet. Here is your new login information: <redacted>

As I told you in my e-mail, I have already changed my password. Changing it again and sending it to me in cleartext e-mail is actually making the security of my account worse.

3. Secure Email: Make sure that any email addresses associated with your account are secure, since anyone who can read your email can probably also access your Facebook account. If you believe someone has accessed one of your email accounts, you should change its password.

As I told you in my e-mail, I don't believe anyone has access to my e-mail.

4. Never Click Suspicious Links: It is possible that your friends could unknowingly send spam, viruses, or malware through Facebook if their accounts are infected. Do not click this material and do not run any .exe files on your computer without knowing what they are. Also, be sure to use the most current version of your browser as they contain important security warnings and protection features.

As I said in my e-mail, my operating system is Linux and it is up-to-date. I cannot run any .exe files without serious difficulty. In practical terms, my machine is very unlikely to have been compromised.

5. Log in at Facebook.com: Make sure that when you access the site, you always log in from a legitimate Facebook page with the facebook.com domain. If something looks or feels suspicious, go directly to www.facebook.com to log in.

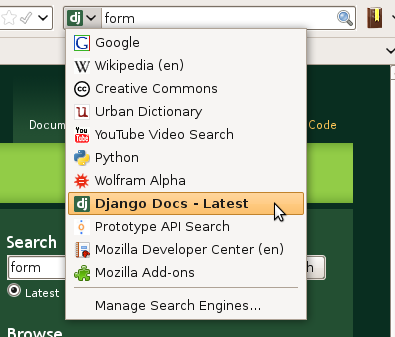

Please. If I want to visit Facebook I select it from the AwesomeBar. I don't even receive e-mails from Facebook any more because I've disabled them, so I'd spot a phishing attack a mile off.

6. Learn More: Please visit the following page for further information about Facebook security and information on reporting material http://www.facebook.com/security

Wow, practical.

Finally, if this did not resolve your issue, please revisit the Help Center to select the appropriate contact form and submit a new inquiry: http://www.facebook.com/help/?ref=pf

So that you can ignore what I say all over again?

Thanks, The Facebook Team

Thanks for nothing.

These e-mails include random links, and it's probable that the nature of the attack could be uncovered by finding out more about what these links contain. It seems very likely that the page you would see will try in some way to continue the attack. That is the definition of a worm: an attack that propagates itself over the network. I tried downloading the contents of a link with wget. It timed out.
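For anyone who wants to repeat that kind of check without opening the link in a browser, here is a minimal Python sketch of the same idea as the wget attempt. It is not what I ran: the function name `fetch_raw`, the ten-second timeout and the 64 KB read limit are all my own illustrative choices. It only downloads the raw bytes and never renders or executes them.

```python
import urllib.request


def fetch_raw(url, timeout=10.0, max_bytes=65536):
    """Download at most max_bytes from url and return them as bytes.

    Returns None if the request fails or times out. The content is
    never rendered or executed, only returned for inspection.
    (fetch_raw and its defaults are hypothetical, for illustration.)
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.read(max_bytes)
    except OSError as exc:
        # URLError and socket timeouts are both subclasses of OSError.
        print(f"fetch failed: {exc}")
        return None
```

A `None` result here corresponds to what I saw with wget: nothing came back before the timeout. Even with a tool like this, it is safer to inspect suspicious links from a machine or account you don't mind losing.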

Worms are not unknown on Facebook. As always, think very carefully before clicking on untrusted links or installing untrusted apps, and check carefully that the site you are entering your credentials into is the one you expect.
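The "is this the site I expect" check can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the helper name `is_expected_host` is made up, and insisting on `https` is my choice rather than anything Facebook told me. The point is that the hostname has to be the expected domain or a subdomain of it, not merely contain the word "facebook".

```python
from urllib.parse import urlsplit


def is_expected_host(url, expected_domain="facebook.com"):
    """Return True only if url uses https and its host is
    expected_domain or a subdomain of it.

    (is_expected_host is a hypothetical helper for illustration.)
    """
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    # hostname strips any "user@" prefix and port, and is lower-cased.
    host = (parts.hostname or "").rstrip(".")
    return host == expected_domain or host.endswith("." + expected_domain)


print(is_expected_host("https://www.facebook.com/login"))           # True
print(is_expected_host("https://facebook.com.evil.example/login"))  # False
print(is_expected_host("https://facebook.com@evil.example/"))       # False
```

The last two examples are the classic tricks: putting the real domain at the front of a longer hostname, or in the user-info part before an `@`. A check like this only looks at the address; it says nothing about whether the page behind it is trustworthy.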

My thanks to Sammy and Marit for alerting me to the attack.